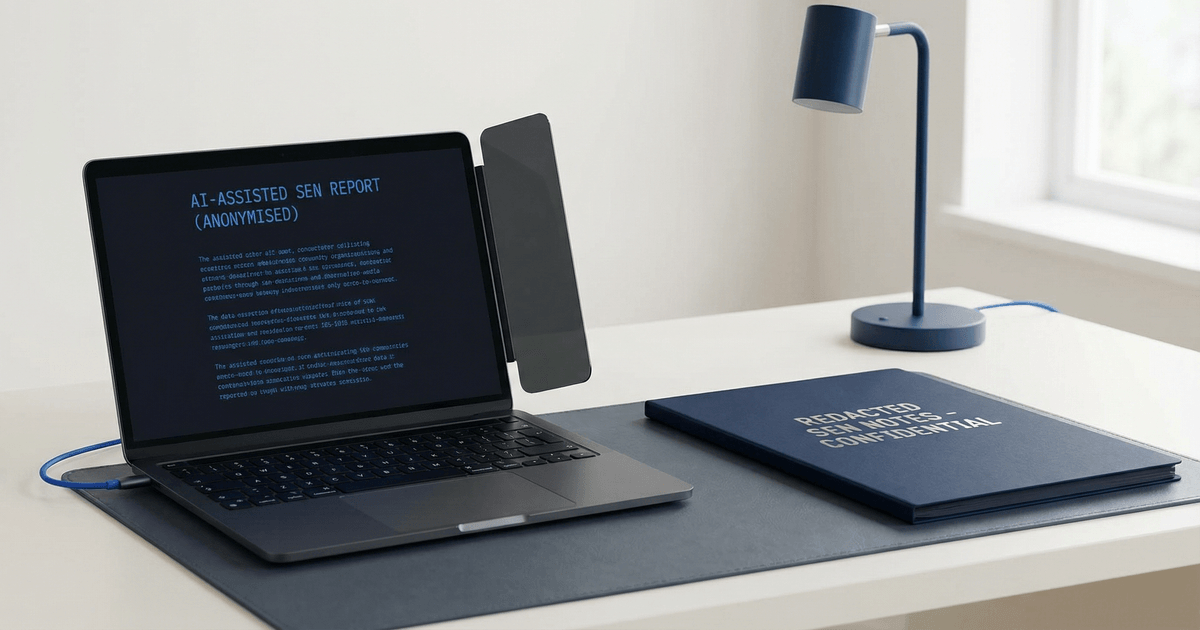

AI tools are moving into school admin quickly. For SENCOs, the appeal is obvious. A model can turn rough notes into a cleaner summary, shape a first draft of a parent letter, or help structure a review record that started life as a messy page of bullets.

That is useful because SEN work is repetitive. But it is also sensitive. The more helpful the tool sounds, the more careful the school needs to be about what goes into it and what happens to the result afterwards.

Where AI can genuinely help

AI is at its most useful on first-pass work. It can help a SENCO sort meeting notes into headings, draft a plain-English explanation of a support change, or turn a long list of observations into a shorter note for checking.

It is especially useful when the human judgement comes later. The draft can be quick. The decision still needs a person who knows the child, the context, and the school’s expectations.

Where the risk sits

The risk is not only that the answer might be wrong. The bigger issue is that school data can end up in the wrong place. A consumer chatbot is not the same thing as a controlled school system. Once personal information is pasted into the wrong tool, the school may no longer control where it is stored, how long it is kept, or who can see it.

UK GDPR does not stop schools from using better tools. It does mean they need a lawful reason for the processing, a clear process, and a clear boundary. That caution is not limited to names. Notes about behaviour, attendance, health, family context, or anything else that could identify a pupil need the same care, and health information counts as special category data under UK GDPR.

A simple check before a school uses AI

- Is the tool approved by the school or trust for pupil data?

- Are staff avoiding names, dates of birth, diagnoses, or other personal data unless the tool is explicitly approved?

- Is a human checking every output before it goes anywhere?

- Is the finished note saved in the school’s system, not left in the chat window?

- Would the wording still feel sensible if it were shared with a parent, a colleague, or a regulator?

If the answer to any of those questions is no, the school should pause. The goal is not to ban useful tools. The goal is to make sure the tool fits the school’s responsibilities, rather than the other way around.

What good practice looks like in SEN

In practice, good use of AI tends to follow the same pattern. A staff member starts with a checked source record, removes unnecessary personal detail where possible, asks the tool for a draft, then edits the result against the original notes.

That keeps the human in charge. It also stops AI from becoming a hidden shortcut that nobody can explain later.

Good examples include a summary of anonymised meeting notes, a plain-English letter based on an approved outline, or a set of headings for an annual review pack. Poor uses include asking a model to judge need, predict progress, or make a support decision from raw notes. Those are professional judgements, and they belong with the people who know the child.

A sensible boundary

One helpful rule is simple: AI can reshape information, but it should not become the source of truth. The source of truth should still be the school’s own record.

That matters because SEN work is not only about writing. It is about continuity. A good record helps the next person understand what happened, what was agreed, and what needs to happen next.

MeritDocs keeps notes, plans, and actions in one place, which reduces the temptation to copy and paste sensitive details into several different systems. If AI is used at all, it sits beside a proper record, not in place of one.

The schools that get the most value from AI will not be the ones that use it most aggressively. They will be the ones that set a clear boundary early: helpful for drafting, never trusted blindly, and always checked against the real record.